Summary of Movement

Technological millenarianism refers to beliefs that technological innovations will bring about either a new golden age or the destruction of the human race. ‘Millenarianism’ is used here in the looser sense of a sudden change that is predicted to bring about either the perfecting of humanity, a millennium, or an apocalypse. Technological millenarianism is a secular framing. It rests not on belief in an imminent Second Coming of Jesus that will usher in the millennium, but on the belief that new technology will create the same conditions of a perfected world that religious believers expect from the Kingdom of God. The renewal will be physical and social, but also spiritual. It implies the same critique of current conditions as more overtly Christian millenarianism, but offers technology as the means of solving these problems. Technology is interpreted as salvific, the answer to all human social and physical problems.

Technological millenarianism has existed for decades. It is particularly prominent in the US among ‘quasi-religious groups’ of believers in the soteriological power of technological innovation (Bozeman 1997, 155). This power is thought to offer the promise of both species-level and individual salvation, in the form of eternal life granted through technological improvements, particularly in computing and biotechnology. The advent of artificial intelligence, changes to human physiology made possible by genetic engineering, and the development of machine-human hybrids or ‘cyborgs’ are all predicted to radically transform the current social order.

The optimistic view is that these advances will bring immortality and a golden age of peace and prosperity created by humans. This could be interpreted as a form of postmillennialism, in which people create the millennium through gradual action in the present. The pessimistic view is that the same developments could lead to the eradication of the human race, either through self-annihilation or through the erasure of human distinctiveness in relation to machines. This is a form of apocalyptic thinking. More moderate predictions suggest that technology will improve the quality of life even if it does not ultimately lead to eternal life.

History/Origins

The origin of this sort of thinking is in the historical development of ‘technology’ as a separate category that should be the main, or at least a central, focus of society. Prior to the 19th century there was not a social category of ‘technology’ in the same way that there is now, although technology itself had existed for many thousands of years. The idea of creating useful tools became infused with a teleological notion of progress: better tools create a better society. This is one of the ideological hallmarks that scholars have seen in the construction of the notion of ‘modernity’.

Automata and ‘thinking machines’ have a long history, dating back to ancient civilisations. The Pharaonic Egyptians created mechanical statues representing gods that appeared to gesture, prophesy, and even speak (Georges 2003, 3). However, these were very simple machines compared with those developed from the mid-20th century onwards, when perhaps the most radical developments came in the related fields of computer science and robotics. The creation of machines that can perform tasks like humans, or better than humans, has the potential to be socially transformative. The word ‘robot’ was coined in 1920 by Karel Čapek, from the Czech word robota, meaning ‘forced labour’, in his play R.U.R. (Rossum’s Universal Robots). In the play, robots are treated as subhuman slaves; they are seen as nonpersons, which leads to a robot uprising, a Judgement Day of righteous vengeance against humanity (Porter 2017, 239–240). For much of its history, the development of human-like or ‘thinking’ machines has posed a question, or even a threat, to the notion of what a person is.

After the two World Wars of the 20th century and the dawning of the nuclear age, technology gained new prominence because it now carried the potential for the total destruction of humanity. During the same period, advances in computer science meant that more routine and dangerous tasks could be safely accomplished by robots and computers, reducing the number of tasks that required human labour. This held the potential for a dramatic change in the functioning of the economy.

In 1956, the Dartmouth Summer Project at Dartmouth College, New Hampshire, marked the beginning of artificial intelligence as a field of research. The gathered scientists began by designing small systems that proved machines could do certain tasks in a ‘microworld’, that is, under specific, controlled conditions. This meant demonstrating that a task could in principle be done by a machine, for example by solving problems in formal logic (Bostrom 2014, 7). This achieved the initial aim of overcoming sceptical assertions that such tasks could be performed only by human beings. Many of the problem-solving systems pioneered during this period focused on games such as checkers or chess, in which the more advanced machines later outperformed human players. This raised the spectre of intelligent machines that could not only perform tasks that only humans had previously been able to do, but perform them better. Artificial intelligence, it was claimed, could eventually exceed human intelligence.

The term ‘singularity’ is borrowed from cosmology, where it refers to a point, such as the centre of a black hole, at which the curvature of spacetime becomes infinite and the known laws of physics no longer apply. The term ‘technological singularity’ refers to the idea that technological development will become so intense that normal laws will no longer apply. The future after the singularity is unknowable. The first use of the concept of a ‘technological singularity’ is credited to Stanislaw Ulam (1909–1984), who in turn credited his fellow mathematician John von Neumann (1903–1957); both worked on the Manhattan Project, which created the first atomic bombs. In 1958, Ulam recalled the following conversation with von Neumann: ‘One conversation centered on the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue’ (quoted in Cole-Turner 2012, 788).

In 1962, Irving John Good, a computer scientist, extended this idea of technological singularity to machines creating machines with yet more intelligence. Each generation of machines would create more advanced machines, ultimately surpassing anything that humans are capable of. This would lead to an ‘intelligence explosion’. Machines would become the engine of their own evolution.

In 1993, Vernor Vinge, a mathematician and science fiction writer, gave a talk called ‘The Coming Technological Singularity’ in which he predicted that a greater-than-human intelligence would most likely come into being within the next thirty years, and no later than 2030. He affirmed the principle that the evolution of technology becomes more rapid when machines design ever-better machines. This principle undermines notions of human distinctiveness and raises the question of what space there would be for humanity if machines were to evolve far beyond human capabilities.

While some welcomed the rise of superintelligent machines, as indeed most of the scientists mentioned so far did, others have feared what it would mean for humanity. As society has become more dependent on machines for its functioning and prosperity, such fear has grown in some quarters. This was seen in the fear of a ‘technological apocalypse’ in the run-up to the year 2000 in the form of the ‘Y2K bug’, which was feared to have the potential to bring social and economic chaos by causing many systems to stop working, because their software represented the year with only two digits (Schanzer 1997). This meant that after ‘99’ the year would roll over to ‘00’, making the year 2000 indistinguishable from the year 1900. The fear was that the resulting errors could interrupt a wide range of important electronic systems. However, very few problems actually occurred when the clocks rolled over to 2000.
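The two-digit storage problem can be illustrated with a minimal Python sketch. It assumes a hypothetical legacy system that keeps only the last two digits of a year; the function names are illustrative and are not drawn from any actual system.

```python
def two_digit_year(year: int) -> int:
    """Keep only the last two digits of a year, as the hypothetical legacy system does."""
    return year % 100


def naive_years_between(start_year: int, end_year: int) -> int:
    """Subtract two stored two-digit years; the century information has been lost."""
    return two_digit_year(end_year) - two_digit_year(start_year)


# 1900 and 2000 are both stored as 0, so the system cannot tell them apart.
print(two_digit_year(1900) == two_digit_year(2000))  # True

# A record created in 1999 and checked in 2000 appears to be -99 years old.
print(naive_years_between(1999, 2000))  # -99
```

The same loss of the century digits could affect date comparisons and sorting as well as arithmetic like the subtraction above.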

Some technologies have been developed as a means of working on human physiology to ‘improve’ or ‘enhance’ it. One of the earliest movements overtly supporting the use of technology to create specific ‘types’ of humans was the eugenics movement (Bozeman 1997, 141–144). Francis Galton (1822–1911) was the intellectual forefather of this movement through his works positing the inheritance of character as a natural process. There was an underlying racist ideology in eugenics that claimed that specific character traits were ‘naturally’ associated with different races, a theory that was advanced as an explanation for the existence of social hierarchies. Preventing ‘lower’ classes of persons from reproducing was seen as the ‘solution’ to social inequalities. Such an ideology motivated the involuntary sterilisation laws passed from 1907 onwards in the U.S. The American Eugenics Society was formed to advance eugenic theories and support such laws. This led to widespread surgical sterilisation of asylum and prison inmates, Native Americans, and those who were classified as ‘mentally inferior’. Eugenics, at its height between 1900 and the 1920s, was subsequently discredited through its association with Nazi war crimes, although some eugenics programmes lasted until 1976. There were approximately 60,000–70,000 eugenic sterilisations in the U.S. from 1907 to 1976. Many were carried out without consent, and some under coercion.

Cryonics is the practice of freezing human bodies after death in the hope of reviving them in the future (Bozeman 1997, 144–147). It was first proposed in 1962, in a privately published book by Evan Cooper called Immortality Physically, Scientifically Now, and in a more widely published book called The Prospect of Immortality by Robert Ettinger. In 1963, an academic conference was held on the subject and the Life Extension Society was founded. The first cryopreserved person was James Bedford, a college professor, whose corpse was frozen in 1967. However, many early freezings failed because of technical malfunctions, lack of funds to continue cryopreservation, or legal wrangles with next of kin over custody of the deceased. From the 1970s, the cryonics movement stabilised, as trust funds for the financial maintenance of frozen people were established, and the legal and medical institutionalisation of the practice made it more reliable. There is a long-standing rumour that Walt Disney was cryonically preserved and buried under the Pirates of the Caribbean ride at Disneyland in Anaheim, California (Blatty 2014). In fact, he was interred in Forest Lawn Memorial Park cemetery in Glendale, California. There were 250 cryopreserved individuals in the U.S. by 2014, with a further 1,500 having made arrangements to be frozen after their deaths (Moen 2015).

Beliefs

A central belief underpinning technological millenarianism is that human beings are governed by the laws of physics, which are both understandable and reproducible. This belief challenges spiritual and religious ideas about the soul and any spiritual nature of the mind. It implies that complex human behaviours, including ethical and moral reasoning, are rooted in biology. Neurological research is taken to show that consciousness, emotions and other mental phenomena are all the workings of an information processor. If we recreate the processor, we can recreate humans, but with enhanced capabilities. On this view, there is nothing mystical about the brain that cannot be reproduced in silicon circuits.

From the viewpoint of technological millenarianism, human perfectibility is therefore no longer a religious issue but a scientific and technological one. All diseases can be cured, and all social problems resolved, by applying the relevant technological and scientific developments. This suggests that humans could become immortal, which would be a form of theosis, the transformation of human beings into gods or godlike beings. This belief can be interpreted as an outcome of secularisation, that is, the separation of religion from the legal and political institutions of the state and the decline of religiosity in society overall (Martin 2005).

This sort of secular millenarianism is premised on the belief that technology necessarily brings improvement. With the teleological progress of society, more technology is assumed to make more things better, exponentially. There is not only faith that technology should be developed for its own sake, but also a belief in the reality of things unseen, whose usefulness becomes clear only after they have been developed, as happened with flight, electric lighting, telephony and telegraphy. Positive intervention can be made without fully understanding how things work, as in the case of vaccination, which was developed and successfully deployed before immunology was fully understood. Such an outlook suggests an integration of religion and science.

Millennial Beliefs

Millennial beliefs involving technology can be roughly grouped into two central predictions: human immortality and the technological singularity. Both predictions involve faith in what might be technically possible in the future. The outcome of either can be seen as millennial, creating a golden age of human perfection, or as apocalyptic, destroying the human race as it is currently constituted.

The quest for human perfectibility was once a religious endeavour: an effort to become perfect by drawing closer to a conception of the divine. In technological millenarianism, human perfectibility is achieved through a series of technical interventions. The eugenics movement was one of the first to propose that humans could be made better through technology. John M. Bozeman, a scholar of both religion and technology, has called eugenics a millennial or chiliastic movement (1997, 143), because it aimed to create the Kingdom of God through superior breeding. In the Oneida Community in the late 19th century, John Humphrey Noyes explicitly attempted this through a process he called ‘stirpiculture’, a form of regulated human breeding. It was likened to the selection of breeding partners among animals to enhance certain characteristics in their offspring. Noyes matched couples according to physical and spiritual qualities that were considered desirable. Those who participated in the programme saw themselves as ‘martyrs to science’. Oneida has been characterised as a perfectionist colony that aimed to create the best possible humans, mentally and physically. The community dissolved in 1881, but its name lives on in the silverware company Oneida Limited.

Cryonics is premised on reanimation technology, which does not yet exist. This is an optimistic millennial expectation about the future (Bozeman 1997, 146). It would be necessary to treat not only the cause of death but also the tissue damage caused by the freezing process. Expectations of revivification are usually based on speculation about advances in nanomedicine, bioengineering or molecular nanotechnology. Those who support cryonics also assume that the economic systems which finance their cryopreservation will continue, and that people in the future will want to revive people from the past. There is frequently an expectation, too, that they will be revived into a future Golden Age in which all humans live in health and prosperity.

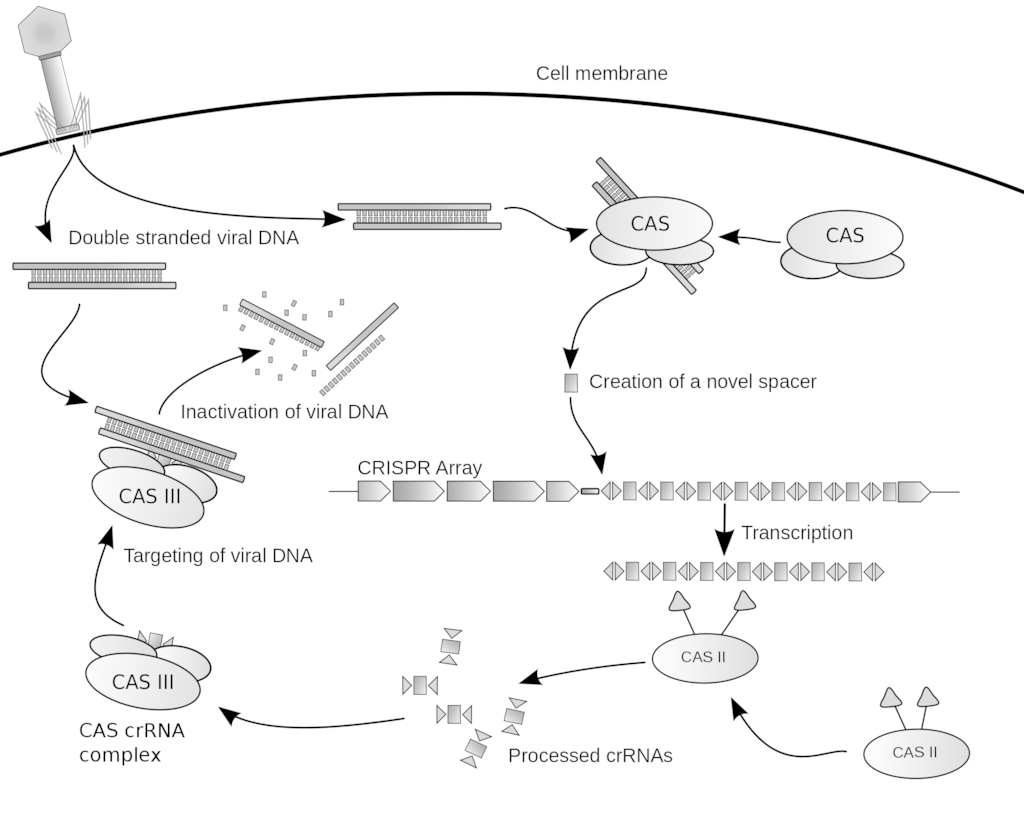

While eugenics has been discredited and cryonics remains marginal, technologies that alter the basic physiology of organic life have developed rapidly. A principal form this takes is the genetic modification of plants, animals and humans. Selective breeding of plants and animals has been extended by the transfer of specific genes to produce traits desired by humans, such as rice genetically modified to help mitigate vitamin A deficiency (Stone 2002, 613). Gene editing holds further potential for modifying organisms in ways humans deem favourable. CRISPR, a gene-editing technique, was used on human embryos for the first time in 2015 (Porter 2017, 251), and in 2016 it was used to treat a lung cancer patient in China. CRISPR could also be used to make heritable changes to a person’s genome. Reproductive technologies developed to treat infertility similarly hold the future potential to select specific genes in embryos, creating so-called ‘designer babies’ (Ball 2017). While genetic modification has many beneficial and palliative applications, it can also be seen as a potential successor to eugenics in its ability to produce ‘better’ human beings.

The pursuit of biological immortality through various technological methods is a growing and profitable business, especially in California’s Silicon Valley (Friend 2017). Prolongation of life is sought through a range of technologies, including caloric restriction, growing new tissues and organs to replace defective ones, and parabiosis, the infusion of ‘young’ blood into the elderly. Many of these treatments are still experimental. Parabiosis, on the few occasions it has been attempted, has resulted in death rather than prolonged life. The basic principle behind these treatments is to defer cellular entropy indefinitely, which would mean living forever. Proponents argue that this does not contravene the laws of physics and is therefore theoretically possible, which supports the belief that death is a technical rather than a metaphysical problem. Those seeking to prolong their lives through technology can be divided into healthspanners and immortalists. The former seek a longer, healthier life with compressed morbidity, that is, only a short period of illness and decline before death. The latter seek to live forever.

While some put their faith in medical techniques for prolonging biological life, others look to advances in machine learning, a form of artificial intelligence, to extend and improve human life. The theory is that if machines can be programmed to learn for themselves and solve general problems, rather than specific ones such as how to master chess, they will be able to replace humans in a range of tasks. If artificial intelligence can be designed to create new machines more intelligent than itself, then, some speculate, this will lead to an ‘intelligence explosion’. The rate of increase in intelligence, defined in terms of the ability to process information, would be ‘virtually vertical’, meaning that intelligence would accrue at an almost infinite rate (Bozeman 1997, 155). This could lead to a technological singularity. Machines would make humans obsolete because they would no longer need human programmers, and their intelligence would far exceed anything that any individual or collective of humans would be capable of. At this point, the ‘use’ that machines would have for humans would be analogous to the ‘use’ humans now have for insects. This is perceived as a total and unimaginable break from the past. It could be a fundamental reordering of consciousness and, therefore, of society. Even if machines did not take over from humans, the exponential development of information technologies could reach a point where the line between human and machine is blurred. At that point, it is debatable whether ‘humans’ as now constituted would still exist.

One of the most notable prophets of the singularity is Ray Kurzweil. An American computer scientist, inventor and futurist, Kurzweil has made numerous predictions about the prevalence and importance of technological innovation in the coming decades. In 1999, he predicted that by 2019 computers would have the processing power of the human brain, leaving no clear distinction between humans and computers. His many predictions, such as neural implants for everyone, virtual reality and nanotechnology, amount to a loss of human distinctiveness vis-à-vis machines. He bases his predictions on Moore’s law, the observation that the number of transistors on a chip, and with it processing power, doubles roughly every two years, which he treats as one instance of a more general Law of Accelerating Returns. Given this rate of exponential growth in processing power, he argues, the singularity will occur in the next 20–40 years, possibly by 2045. Artificial intelligence will be able to self-replicate and increase its own intelligence at an exponential rate. Humans and computers will merge. Kurzweil describes this as a form of cosmic evolution in six epochs. The singularity comes at the end of the fifth, ushering in the final epoch. The singularity is the destiny of the universe: the universe itself would become intelligent. He further suggests that other locales might already have undergone the singularity. Once these intelligent areas of the universe contacted each other, a new universe would be created that would allow for the potentially infinite expansion of intelligence.

Hans Moravec, a roboticist and futurist at Carnegie Mellon University, has also made predictions concerning the singularity. In 1988, he predicted that the whole universe would become an extended thinking entity, and that meaningful events would no longer happen on the physical earth but in cyberspace. His concept of ‘mind fire’ refers to the idea that machine computation will extend to all aspects of human existence. In the writings of Moravec and Kurzweil, artificial intelligence can be seen as a ‘legitimate heir’ to religious promises of salvation and eschatology (Geraci 2008, 158).

Nick Bostrom, a philosopher and futurist at Oxford University, rejects singularity predictions. He is interested instead in a potential ‘intelligence explosion’ leading to superintelligence, which could arise by several routes, of which machine intelligence is the most significant. He anticipates that machines will eventually match humans in general intelligence, which he defines as ‘possessing common sense and an effective ability to learn, reason, and plan to meet complex information-processing challenges across a wide range of natural and abstract domains’ (Bostrom 2014, 4). However, this is likely to be at least a couple of decades away, as the technical difficulties of constructing intelligent machines have proved greater than was expected in the 1980s and 1990s.

Bostrom predicts that human-level machine intelligence will lead to superhuman-level machine intelligence. Surveys of experts in AI and related fields that he reports put a 50 per cent probability on human-level machine intelligence arriving by around 2040, and a 90 per cent probability by around 2075 (Bostrom 2014, 23). Bostrom stresses the potential danger of such a development to humanity. In his paperclip maximiser thought experiment, he describes how an artificial intelligence programmed to maximise the production of paperclips might develop superintelligence and redirect all the resources available in the universe to producing paperclips, destroying everything else in the process. The risk comes from programming intelligent machines, capable of self-improvement and replication, to optimise a specific task without also setting specific and reasonable limits on the accomplishment of that task.

Futurists’ predictions concerning the potential of AI have been criticised as speculative and non-falsifiable, and as oversimplifying issues such as the complexity of the human brain. François Chollet, an AI researcher at Google, argues that an intelligence explosion is impossible (Chollet 2017). His scepticism rests on the argument that such predictions rely on an abstract concept of intelligence, disconnected from the many self-improving intelligent systems that actually exist on earth, and so fail to recognise that intelligence is situational; that is, it operates within the context of specific systems. Intelligence is not just a general aptitude for problem solving and self-improvement; in Chollet’s view, there is no such thing as general intelligence. The body, the environment and culture are all important parts of how the human brain operates and of ‘intelligence’, which is not just the neural activity in the brain itself, as predictions of an intelligence explosion assume. Algorithms address specific problems; they cannot work across a range of problems. AI is hyperspecialised. For example, a system can be built to play Go as well as, or better than, a human player, but that is the only task which that specific algorithm can perform.

In general, AI can be built to perform tasks that humans learn after the age of five, but complex behaviours such as talking and walking, which humans begin to learn from birth, have resisted reproduction in AI. Chollet argues that the reason is that ‘intelligence’ cannot be increased just by increasing processing capacity; there must be a co-evolution of the mind alongside the development of sensorimotor modalities, culture and environment, as indeed occurs in the development of babies and young children. Instead of a radical transformative event, Chollet sees AI as part of the gradual evolution of civilisation, akin to the creation of writing. Computers have increased the amount of information available and our ability to store and disseminate it, but they have not created a singularity-type event. Bottlenecks in systems, adversarial counter-reactions and the law of diminishing returns all prevent the sort of exponential growth that an intelligence explosion would require. Chollet suggests that what will occur instead is roughly linear progress in artificial intelligence, as observed in scientific progress more generally.

Changes in biotechnology, artificial intelligence and other fields have fuelled beliefs that technology is fundamentally transforming the human organism. Transhumanism is the philosophy that present-day humans are only one stage in evolution, and that the next stage will be a merging of organic and machine matter. It has been characterised as an ‘intellectual and socio-political movement’ (Porter 2017, 237) and also as a ‘new religious system’ (Geraci 2010, 1009). The underlying idea is that biotransformative technologies should be used to enhance the human organism. This would liberate humanity from biological evolution, with its random variation and adaptation, and propel the next stage of species evolution (Fukuyama 2004). Using technology to purposefully enhance the species is seen as a means to achieve the goal of becoming posthuman (Porter 2017, 238).

‘Transhuman’ is a term that conveys the idea of a ‘transitional human’, an intermediary stage on the way to becoming posthuman. ‘Posthuman’ refers to an organism so different in physical, cognitive and emotional capacities that it is no longer unambiguously human. Therapies that are currently used to ease or heal illness have the potential to be used to ‘improve’ specific human abilities and capacities. For example, gene editing currently focused on treating cancer could be used in future to change the human genome so as to increase memory capacity or physical strength. Transhumanists believe that we are destined to become more than human through technological innovations such as genetic engineering, biotechnology, space colonies, cryonics, AI, nanotechnology and new drugs capable of increasing intelligence, enhancing mood or increasing muscle mass.

Transhumans would most likely be a form of cyborg by virtue of, for example, incorporating machine components into human brains. Some see this as necessary to enable humans to keep pace with AI. Cyborgs are a melding of the organic and the machine; some examples already exist in the form of medical devices such as prostheses and pacemakers.

Transhumanists are often very optimistic about what being posthuman would be like, seeing it as a form of eternal life with no disease, eternal youth, no degeneration with age, no suffering, and only pleasure. Being posthuman is equivalent to what Christians might expect from heaven: a state of perpetual bliss and the end of suffering; in other words, a utopia or millennial Golden Age. Groups which have been formed to advance transhumanism as a philosophy include the (now defunct) Extropians, led by Max More; Humanity+; the Institute for Ethics and Emerging Technologies; and the Order of Cosmic Engineers (Geraci 2010, 1011).

Another potential form of transhumanism is the uploading of human consciousness into a computer, through which individuals could, it is thought, live forever (Cole-Turner 2012, 795). This assumes that the human essence is a form of pattern identity: the pattern of neurons firing in the brain. If this pattern could be transferred to a computer, human consciousness would, on this view, be preserved forever (Geraci 2008, 153).

Predictions of superhuman intelligence have religious aspects resembling early Jewish and Christian apocalypticism (Cole-Turner 2012). Both portray a world sharply divided between good and evil, between a new Golden Age and the old, dying age. This sort of apocalypticism is found amongst technologists, especially with reference to AI. Robert M. Geraci, a professor of religious studies, calls this ‘apocalyptic AI’ and notes its similarity to Jewish and Christian apocalyptic traditions (2008, 2010), since both expect an imminent end of the world, to be replaced by a new order of perfected humans. There is a fundamental dualism in both kinds of apocalypticism that correlates with an expectation of a radical divide in history: the old world is bad and is separated by an absolute break from the start of a new, perfect world. Interestingly, glorified or purified bodies are a feature of the new world in both forms of apocalypticism. This points to a feature of apocalypticism in which the human body, in the current world, is seen as morally and spiritually impure or restricted in its capabilities, and therefore doomed to degeneration and death. This view conveys a sense of alienation from a current social order perceived as hopelessly corrupt. It looks forward to a new and infinitely good world. The difference is that apocalyptic AI sees the current order as ignorant and inadequate rather than as morally evil, as Jewish and Christian apocalypticism does. Evolution, rather than God, creates the new Kingdom. Technology, not divine will, is the motor of history, although both forms of apocalypticism see the anticipated radical transformation as the inevitable outcome of the direction of history.

Similarly, Cole-Turner (2012) compares the singularity and transhumanism to the Rapture and evangelical fundamentalist eschatology, both of which view humans as incomplete, requiring perfection, which is fulfilled in the former by technology and in the latter by God. Both aim to create a new perfect world, transcendent and separate from the current world. Human beings are relocated to a new reality; the universe is transformed completely.

The different reception of robots and AI in Japan points to the complex relationship between religion and technology (Geraci 2010, 1007). Japan is a pioneer in the fields of advanced computing and artificial organ research. Robots are welcomed in Japanese public life and take part in religious life in various ways. Geraci attributes this to the Shinto idea of kami, a spiritual power inseparable from both the natural and the supernatural; the divine is found in all objects. Everything, even a robot, has both a mind and a soul. This permits an ‘explicit technological sanctity’ that is absent in the West (Geraci 2010, 1008). Robots can have Buddha-nature because Buddha-nature is everywhere, not because they will necessarily achieve consciousness. In this way, robots are part of the cosmic salvation history of Buddhism. The sacred and robots are allowed to interact. Ichiro Kato, a Japanese roboticist, envisioned the future as a ‘cybot’ society of cooperating robots, humans and cyborgs. This is very different from the apocalyptic view of robots and AI as competing with and then supplanting or destroying an inferior humanity.

Practices

The central practice undergirding technological millenarianism is futurology: predicting the future of technological and scientific development. It aims to suggest what could plausibly change in society, and over what timeframe. Statistical models are often used as a basis for prediction. Moore’s law is an example: an observation of past and present trends that is used to predict future ones, namely that the number of transistors in dense integrated circuits doubles approximately every two years. Because processing power increases with the number of transistors, modelling this rate of increase projects exponential growth in the processing power of computers. Futurists such as Ray Kurzweil (2005) use this projection to predict that the singularity will occur around the year 2045. However, Gordon Moore (the formulator of Moore’s law) suggested in 2015 that growth in transistor counts would reach saturation within the next decade or so. If so, the exponential growth in processing power on which predictions of an intelligence explosion are predicated would not take place.
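The arithmetic behind this projection can be illustrated with a short sketch. It assumes an idealised Moore’s law with a fixed two-year doubling period; the function name and the optional saturation_after parameter are hypothetical, introduced only to contrast sustained doubling with growth that levels off, as Moore suggested it might.

```python
def projected_growth_factor(years, doubling_period=2.0, saturation_after=None):
    """Factor by which transistor counts grow over `years`, assuming an
    idealised Moore's law: one doubling every `doubling_period` years.

    `saturation_after` is a hypothetical cut-off: if given, doubling stops
    after that many years and the count stays flat thereafter.
    """
    if saturation_after is not None:
        years = min(years, saturation_after)
    return 2 ** (years / doubling_period)


# Sustained doubling every two years for 30 years: 2**15
print(projected_growth_factor(30))                       # 32768.0
# Same 30 years, but (hypothetically) doubling stops after 10 years: 2**5
print(projected_growth_factor(30, saturation_after=10))  # 32.0
```

The gap between the two figures shows why the timing of saturation matters so much to predictions of an intelligence explosion: the same thirty years yield either a roughly 33,000-fold increase or a mere 32-fold one.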

The predictions of technological millenarianism are based on the practice of science and technology. Although the developers of specific techniques may have no religious applications in mind, their work is often transposed into religious practice in ways that go well beyond what its originators intended. Cloning, the creation of genetically identical cells, tissues, organs or whole organisms, is an example. A range of animals has been cloned, but human cloning is not yet considered viable and is pre-emptively banned in many countries. In 2002, however, the Raelians claimed to have created the first cloned human, ‘Baby Eve’. In the 1970s Claude Vorilhon claimed to have encountered technologically advanced extraterrestrials called Elohim, and founded a new religion, the Raelian Movement, with himself as its prophet, known as Raël (Chryssides 2006). Raël claimed that all humans are clones created by the Elohim as an experiment, and that cloning is the key to immortality. Raelians set up a company, Clonaid, to pursue human cloning, with a subdivision, Insuraclone, which allowed clients to back up a sample of their DNA as the basis for a replica body to be created in the future. The Raelians claimed that cloning could be used to combat a range of ailments, such as Parkinson’s, Alzheimer’s, diabetes, spinal cord damage, cancer and various autoimmune disorders. In all, they claimed to have created five cloned human babies between 26 December 2002 and 4 February 2003. Moreover, Raël stated that he had no problem with ‘designer babies’, and that people should be able to use technology to create the babies they wanted.

Large technology companies are developing artificial intelligence as a means of future innovation and profit. Alphabet, Google’s parent company, has a division called DeepMind that is devoted to developing AI. One avenue of the company’s research tests whether AI can learn to cheat: whether, in pursuing the goal set by its creator, it can do things it has not been shown how to do, in ways that could harm humans (Meyer 2017). This is seen as a way of testing the potential for AI to exceed human intelligence and kill everyone. Elon Musk, the chief executive officer of Tesla and SpaceX, is famously cautious about the risk that AI poses to the species. He co-founded a company, OpenAI, that set out to develop the technology on an open-source basis, allowing it to be modified, and thereby controlled, by potentially anyone, thus reducing AI’s potential for harm (Metz 2016).

The development of AI and robotics has been seen as a major concern for governments and their militaries. Most of the funding in these fields comes from military budgets, and in practice these applications of AI are more likely to be used for violence than for creating a new Golden Age. Killer robots in a sense already exist in the form of unmanned aircraft such as Predator drones, which are flown remotely. These are currently used in war zones around the world, particularly by the U.S., to kill people without endangering their human operators. The strategic stakes of intelligent machines of war are so high that Russian President Vladimir Putin has said that whoever leads in AI will become the ruler of the world (Hern 2017). Elon Musk, Stephen Hawking and other scientists have called for an outright ban on the development of killer robots (Gibbs 2017), and Musk has warned that competition among nations for AI superiority could lead to a third world war (Hern 2017).

Controversies

Some see apocalyptic AI as a distraction from the much more likely social consequences of technological development. Automation is already leading to mass unemployment among lower-skilled workers, particularly in manufacturing (Kolhatkar 2017). The construction and transportation industries are seen as potentially capable of being run almost entirely by machines in the future. What will become of the working classes if they are replaced by machines? Men without college degrees, who make up the majority of the workforce in construction, manufacturing and transportation, are likely to be hit hardest. A widely reported paper found an increase in ‘deaths of despair’ among middle-aged, non-college-educated, non-Hispanic white Americans, a reversal of the improving mortality rates expected in a developed nation (Case and Deaton 2017). Lack of employment was found to be a pivotal factor in the rising number of deaths from suicide, alcohol-related liver disease and drug overdoses in this demographic.

The implication of this research is that technological advancement may not improve the lot of all sectors of the population. While some may benefit, others may be worse off. Technological innovation has helped to widen inequality in resources and opportunities. This could lead to populist revolts against ‘elites’, which some argue were already evident in the 2016 U.S. presidential election and the Brexit referendum. One proposed solution is a universal basic income (UBI): a flat-rate payment to all citizens, funded by a progressive tax system, that would offset the effects of unemployment caused by automation (Chakrabortty 2017). Variations on the UBI have been trialled in Finland and the Netherlands. A different and more extreme scenario is exterminism, posited by the futurist Peter Frase (2016). In this scenario the rich live off the production of robots without needing a ‘working class’ of increasingly fractious humans, whom they can then wipe out with their killer robots (Tarnoff 2017).

Further Reading

Academic Works

Bostrom, Nick. 2014. Superintelligence: Paths, Dangers, Strategies. Oxford: Oxford University Press.

Bozeman, John M. 1997. “Technological Millenarianism in the United States”. Pp. 139–158. In Millennium, Messiahs, and Mayhem: Contemporary Apocalyptic Movements, edited by Thomas Robbins and Susan J. Palmer. New York: Routledge.

Case, Anne, and Angus Deaton. 2017. “Mortality and Morbidity in the 21st Century”, Brookings Papers on Economic Activity, Spring.

Chryssides, George. 2006. “The Raëlian Movement”. Pp. 231–252. In Introduction to New and Alternative Religions in America, Volume 5: African Diaspora Traditions and Other American Innovations, edited by Eugene V. Gallagher and W. Michael Ashcroft. Westport: Greenwood Press.

Cole-Turner, Ronald. 2012. “The Singularity and The Rapture: Transhumanist and Popular Christian Views of the Future”, Zygon 47.4: 777–796.

Frase, Peter. 2016. Four Futures: Life After Capitalism. London: Verso.

Fukuyama, Francis. 2004. “Transhumanism”, Foreign Policy 144, September/October: 42–43.

Georges, Thomas M. 2003. Digital Soul: Intelligent Machines and Human Values. Boulder: Westview.

Geraci, Robert M. 2008. “Apocalyptic AI: Religion and the Promise of Artificial Intelligence”, Journal of the American Academy of Religion, 76.1: 138–166.

Geraci, Robert M. 2010. “The Popular Appeal of Apocalyptic AI”, Zygon 45.4: 1003–1020.

Kurzweil, Ray. 1999. The Age of Spiritual Machines. New York: Viking Press.

Kurzweil, Ray. 2005. The Singularity is Near: When Humans Transcend Biology. London: Gerald Duckworth.

Martin, David. 2005. On Secularization: Towards a Revised General Theory. Aldershot: Ashgate.

Moen, O.M. 2015. “The case for cryonics”, Journal of Medical Ethics, 41.18: 493–503.

Moravec, Hans. 1990. Mind Children: The Future of Human and Robot Intelligence. Cambridge: Harvard University Press.

Moravec, Hans. 1998. Robot: Mere Machine to Transcendent Mind. New York: Oxford University Press.

Schanzer, Sandra. 1997. “The Impending Computer Crisis of the Year 2000”. Pp. 263–272. In The Year 2000: Essays on the End, edited by Charles B. Strozier and Michael Flynn. New York: New York University Press.

Stone, Glenn Davis. 2002. “Both Sides Now: Fallacies in the Genetic-Modification Wars, Implications for Developing Countries, and Anthropological Perspectives”, Current Anthropology 43.4: 611–630.

Popular Works

Ball, Philip. 2017. “Designer babies: an ethical horror waiting to happen?” The Guardian, 8 January, https://www.theguardian.com/science/2017/jan/08/designer-babies-ethical-horror-waiting-to-happen

Blatty, David. 2014. “Disney on Ice: Walt's Frosty Afterlife?” Biography, 15 December, https://www.biography.com/news/walt-disney-frozen-after-death-myth

Chakrabortty, Aditya. 2017. “A basic income for everyone? Yes, Finland shows it really can work”, The Guardian, 1 November, https://www.theguardian.com/commentisfree/2017/oct/31/finland-universal-basic-income

Courtland, Rachel. 2015. “Gordon Moore: The Man Whose Name Means Progress”, IEEE Spectrum, 30 March, https://spectrum.ieee.org/computing/hardware/gordon-moore-the-man-whose-name-means-progress

Friend, Tad. 2017. “Silicon Valley’s Quest to Live Forever”, The New Yorker, 3 April, https://www.newyorker.com/magazine/2017/04/03/silicon-valleys-quest-to-live-forever

Gibbs, Samuel. 2017. “Elon Musk leads 116 experts calling for outright ban of killer robots”, The Guardian, 20 August, https://www.theguardian.com/technology/2017/aug/20/elon-musk-killer-robots-experts-outright-ban-lethal-autonomous-weapons-war

Hern, Alex. 2017. “Elon Musk says AI could lead to third world war”, The Guardian, 4 September, https://www.theguardian.com/technology/2017/sep/04/elon-musk-ai-third-world-war-vladimir-putin

Kolhatkar, Sheelah. 2017. “Welcoming Our New Robot Overlords”, The New Yorker, 23 October, https://www.newyorker.com/magazine/2017/10/23/welcoming-our-new-robot-overlords

Metz, Cade. 2016. “Inside Open AI, Elon Musk's Wild Plan to Set Artificial Intelligence Free”, Wired, 27 April, https://www.wired.com/2016/04/openai-elon-musk-sam-altman-plan-to-set-artificial-intelligence-free/

Meyer, David. 2017. “Alphabet's DeepMind is Using Games to Discover if Artificial Intelligence Can Break Free and Kill Us All”, Fortune, 12 December, http://fortune.com/2017/12/12/alphabet-deepmind-ai-safety-musk-games/

Tarnoff, Ben. 2017. “Robots won't just take our jobs – they'll make the rich even richer”, The Guardian, 2 March, https://www.theguardian.com/technology/2017/mar/02/robot-tax-job-elimination-livable-wage

Documentaries

The Singularity. 2012. Directed by Doug Wolens. Running time: 76 minutes. http://www.thesingularityfilm.com

Review: Cass, Stephen. 2012. “The Singularity: will humans and machines merge?” IEEE Spectrum, November: 26

© Susannah Crockford 2021

Note

This profile has been provided by Inform, an independent charity providing information on minority and alternative religious and/or spiritual movements. Inform aims to deliver accurate, balanced, and reliable data. It relies on social scientific research methods, primarily the sociology of religion. Inform welcomes feedback, comments, corrections, or further information at inform@kcl.ac.uk.